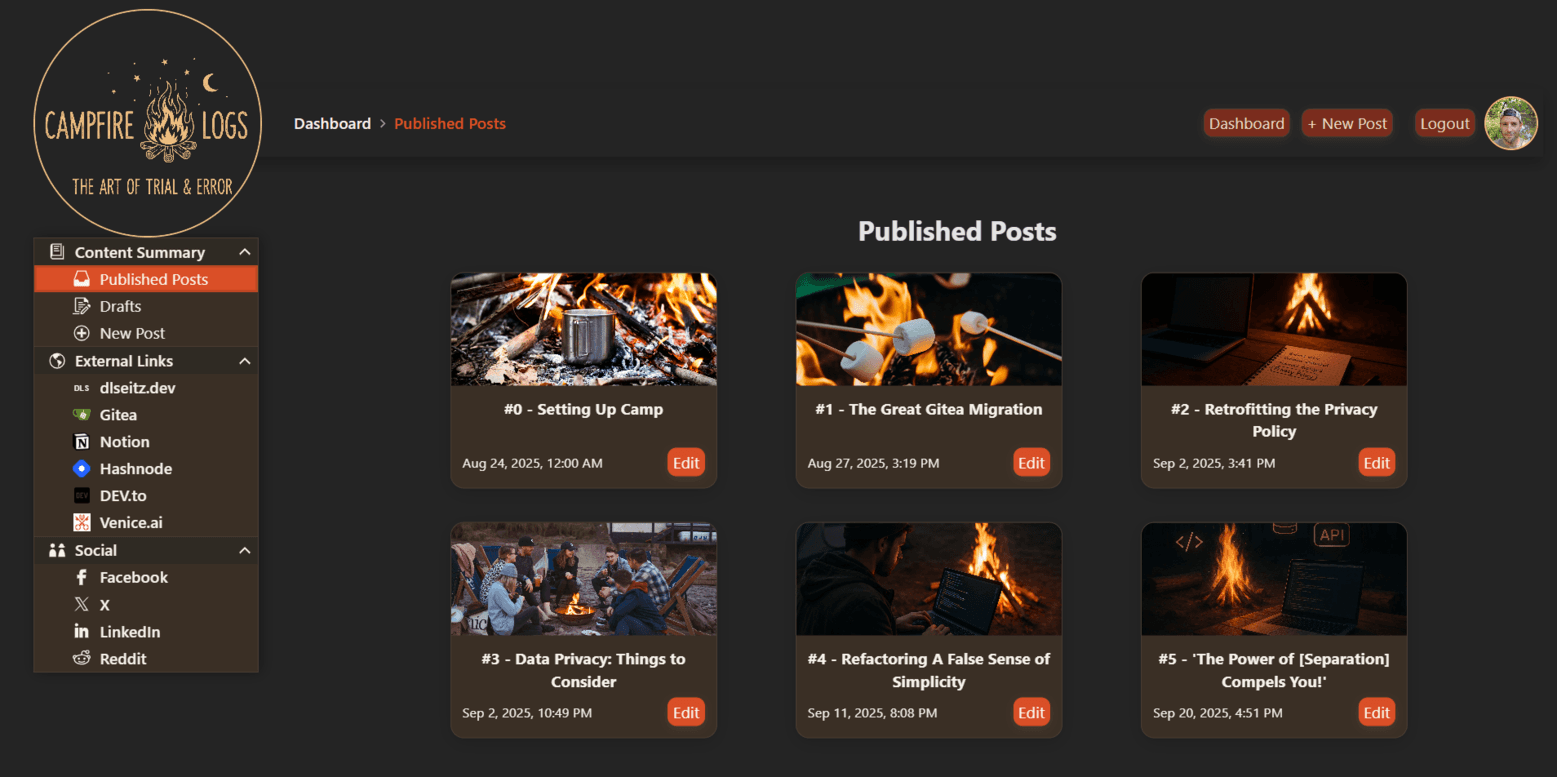

#5 - 'The Power of [Separation] Compels You!'

![#5 - 'The Power of [Separation] Compels You!'](/_next/image?url=https%3A%2F%2Fcdn.hashnode.com%2Fres%2Fhashnode%2Fimage%2Fupload%2Fv1758406315553%2F972bb96e-57ef-4814-9d2e-82c1d29e8168.webp&w=3840&q=75)

I’m Derek, a full-stack developer on a mission to build real-world projects the hard way—by breaking things, figuring out why, and then fixing them better. Fresh out of college at the ripe ol’ age of 38 (I took the scenic route) and based in rural Arkansas, I’m carving out my own path in tech, one code snippet at a time. I build websites, backend systems, and—coming soon—my own blogging platform from scratch (don’t worry, you’ll get to watch), sharing the wins and the “uh-oh” moments along the way. If you’re into practical tech insights sprinkled with a bit of trial and error, you’re in the right place.

Hey there, and welcome back to Campfire Logs: The Art of Trial & Error. In my last log, #4 - Refactoring a False Sense of Simplicity, I introduced you to the front-end demo I recently refactored to be more modular and accessibility-friendly. Today, we are going to talk more about that same refactor, but we are going to focus on some of the enhancements I made to the existing features and the new features added for improved interactivity. We are also going to discuss how separating the data, presentation, and logic layers of the demo improved the maintainability of the codebase by decoupling its components.

For anyone short on time, or who just wants to get to the point, there’s a TL;DR section with links to the live demo and its repo at the end.

For everyone else, grab some coffee or marshmallows (or hot dogs) and a stick—and let’s get to it!

Credit Where Credit is Due

I’ve mentioned in some of my previous logs how important developing with integrity is to me. In short, what that means for me is developing applications and websites in an honest, transparent, and accessible manner. This includes ensuring proper credit and attributions are made when creative works of others are included in what I build.

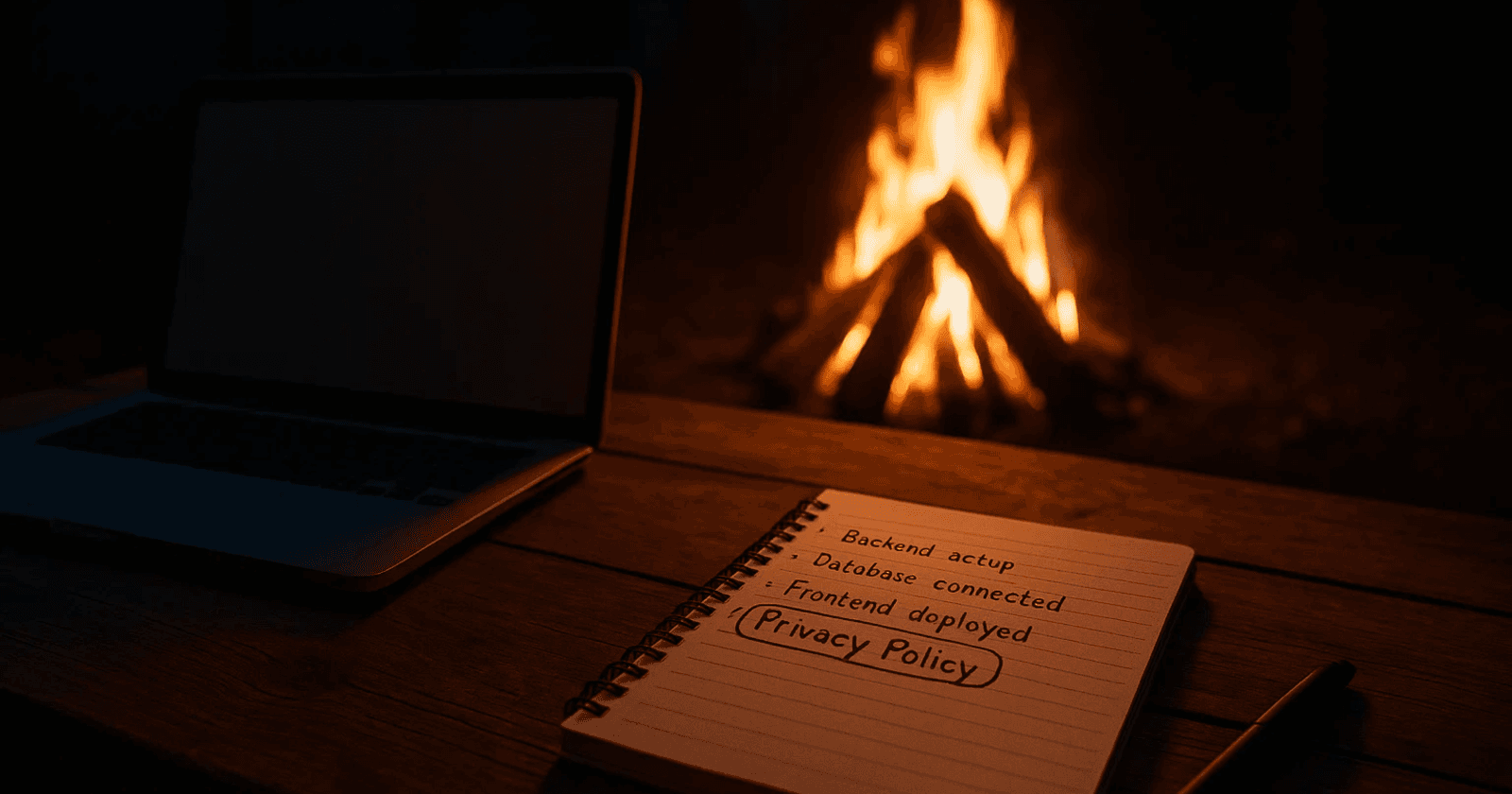

I also said in the last log that the demo I refactored was originally a course project. What I didn't get into was that the refactor involved sourcing all new images to avoid copyright violations with the materials provided in the class. In other words, because the refactor project wasn’t part of the course project, instead being an enhanced demonstration in my portfolio, things were edging a little too far away from being considered “fair use” in the eyes of copyright law. So, I nipped it in the bud to avoid potential headaches (and wallet-aches) down the road. This meant I had a whole different problem to worry about, though: figuring out how to give credit where credit was due.

The Credits & Attributions Page

Because the presentation of the demo needed to emulate an eCommerce front end, the images I used throughout couldn’t be cluttered with attribution links—it would have killed the whole vibe. Instead, the solution I chose was to create a dedicated page, linking it in the copyright information at the bottom of the footer. The page consists of “credits”, each linking the image to its creator, the platform that hosts it, and the license under which I am allowed to use it. I knew, however, that I needed a better way of organizing that data than simply statically coding it into the Nunjucks template for the page, especially if I wanted to connect it elsewhere in the demo down the road.

Because I use 11ty as my static site generator (SSG) with Nunjucks for templating, using a data file that holds an array of all the credit objects seemed like the way to go. At build time, when the SSG creates the files to be rendered by the browser, a for-loop in the Nunjucks template for the page could simply iterate over the array, injecting the data into the HTML build artifact, allowing 11ty to then do all the work.

Now, I know what you’re thinking. “Each credit is still statically coded into the HTML.” Well, yes and no. Yes, the HTML served to the browser appears as a static list of credits, but preventing that was never the point here. The point was to create a modular, more easily maintained system that allows me to make changes in one place that will propagate throughout the project wherever that data is rendered. By separating the data layer (credits and attributions) from the presentation layer (HTML and CSS) and the logic layer (Nunjucks conditionals and JavaScript), I’m letting a tool I’m already using anyway handle more of what it’s designed to do.
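To make that separation concrete, here is a minimal sketch rather than the demo's actual code. The field names, the sample values, and the `renderCredits` helper are all assumptions for illustration. The helper is a plain JavaScript stand-in for the template's for-loop, so the example runs on its own without 11ty or Nunjucks.

```javascript
// Hypothetical contents of an 11ty global data file such as _data/credits.js.
// In a real project this array would be exported with `module.exports = credits;`.
// Every value below is a placeholder, not a real attribution.
const credits = [
  {
    title: "Ceramic Mug",
    creator: "Jane Doe",
    platform: "Example Photos",
    license: "CC BY 4.0",
    sourceUrl: "https://example.com/photos/ceramic-mug",
  },
  {
    title: "Wool Throw",
    creator: "John Roe",
    platform: "Example Photos",
    license: "CC0 1.0",
    sourceUrl: "https://example.com/photos/wool-throw",
  },
];

// Stand-in for the template loop: one list item per credit object.
function renderCredits(items) {
  return items
    .map(
      (c) =>
        `<li><a href="${c.sourceUrl}">${c.title}</a> by ${c.creator} on ${c.platform} (${c.license})</li>`
    )
    .join("\n");
}

console.log(`<ul>\n${renderCredits(credits)}\n</ul>`);
```

Adding or correcting a credit means editing one object in the array. The markup never has to change.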

The Ripple Effect

The Credits & Attributions page was only the start. Other pages also had various sets of data that I needed to separate from everything else.

At this point, the homepage had a “Featured Items” slideshow that cycled through various images, their descriptions, and their prices, all hardcoded into the HTML. The Gallery page also used those same images and information in its carousel of categorized products, but the data here was hardcoded into the JavaScript that controlled the display of the carousel.

Not very efficient.

So, I decided to do the same thing as before, creating a data file to hold all the properties of each product in an array of standardized product objects. This allowed me to use Nunjucks templating again for the “Featured Items” slideshow for quick loading of the homepage while using JavaScript to dynamically populate and sort the product cards in the Gallery page’s carousel for enhanced interactivity (e.g., infinite scroll in the carousel, expanded descriptions on focus, etc.).
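As a rough sketch of how one product array can feed both pages, consider the example below. The field names (`featured`, `category`, `price`), the sample products, and the choice to sort by price are assumptions, not details taken from the demo.

```javascript
// Hypothetical product records shared by the homepage and the Gallery page.
const products = [
  { id: "p1", name: "Ceramic Mug", category: "kitchen", price: 14.0, featured: true },
  { id: "p2", name: "Wool Throw", category: "home", price: 48.5, featured: false },
  { id: "p3", name: "Oak Cutting Board", category: "kitchen", price: 32.0, featured: true },
];

// Homepage: the build-time template only needs the featured subset.
function featuredItems(items) {
  return items.filter((p) => p.featured);
}

// Gallery: group products by category, then sort each group by ascending price.
function groupByCategory(items) {
  const groups = {};
  for (const p of items) {
    if (!groups[p.category]) groups[p.category] = [];
    groups[p.category].push(p);
  }
  for (const list of Object.values(groups)) {
    list.sort((a, b) => a.price - b.price);
  }
  return groups;
}

console.log(featuredItems(products).map((p) => p.name));
console.log(Object.keys(groupByCategory(products)));
```

Both views read from the same records, so a price change made once shows up in the slideshow and the carousel alike.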

You also might be saying, “Why not include the credit and attribution data with the product data and just use one data file?” That’s a great question. I could have for the purpose of this demo, but if there were a backend to this project and a relational database like PostgreSQL attached to it, I would still have both sets of data in separate tables in the database. By using a foreign key between related records in the two separate tables, I could avoid “God Objects,” or objects that become incredibly hard to manage because they have too many responsibilities or hold too much information, causing problems down the road. The same thing applies to the data structures I created for this demo.
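The foreign-key idea carries over to plain data files too. Below is a minimal sketch, assuming each product stores a hypothetical `creditId` that points at a record in the credits data. All names and values are placeholders.

```javascript
// Two separate "tables" kept in their own data structures.
const creditRecords = [
  { id: "c1", creator: "Jane Doe", license: "CC BY 4.0" },
  { id: "c2", creator: "John Roe", license: "CC0 1.0" },
];

const productRecords = [
  { id: "p1", name: "Ceramic Mug", creditId: "c1" },
  { id: "p2", name: "Wool Throw", creditId: "c2" },
];

// Index credits by id once so each lookup is a constant-time Map read.
const creditIndex = new Map(creditRecords.map((c) => [c.id, c]));

// Resolve the reference only where both pieces are needed, much like a SQL JOIN.
// A product whose creditId matches nothing gets `credit: null`.
function joinCredit(product) {
  const credit = creditIndex.get(product.creditId) ?? null;
  return { ...product, credit };
}

console.log(joinCredit(productRecords[0]));
```

Each structure keeps a single responsibility, and the join happens only at the point where a page needs both.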

Connecting with the [Fictional] Community

Because of the dynamic interactivity I developed for the pages I’ve discussed so far, I was left scratching my head looking at the Community Events page of the demo. It was a stark contrast to the rest of the site now. Frankly put… it was ugly and boring. Also, the mock events I had created for the original demo were statically coded into the HTML like the other pages had been. I simply couldn’t leave it like this.

Now, if you were to say that scope creep had found some footing here, I might be inclined to agree with you, at least to a small degree. But looking at the tools available to me while trying to come up with a way to add some pizazz to the otherwise bland Google Calendar iframe and static events on the page, lightbulbs in my head just started flashing. Think Paris Hilton at the 2005 Teen Choice Awards (yeah, I said it).

Since I use Zoho as the email provider for my custom domain, I figured, “How fun would it be to use Zoho Calendar and the Zoho Calendar API for this page?” This would provide a “single source of truth” for the events displayed on the page. All I had to do was figure out how the API (application programming interface) worked—that is, what was needed in the HTTP request to the API endpoint and what data would be returned in the response payload.

The Grunt Work of Community Engagement

Let me go ahead and say, this whole thing seemed a lot easier in my head than it was in reality. The process wasn’t hard, but it was less intuitive than I expected—a perception that likely stemmed from my specific use case and my limited experience with third-party APIs. This was primarily due to two things: Zoho’s documentation not being quite as clear as I thought it could have been, and the need for separate scripts for retrieving the event data at build time and rendering the events carousel dynamically at runtime. No big deal, though; I’ve tackled hairier situations.

The biggest concerns for the API script were checking off the following:

1. Determine the start and end dates of the current month at build time (more on that in a bit)
2. Check the stored OAuth 2.0 API token needed to retrieve data from the Zoho Calendar API, using the refresh token to request a new one if it has already expired
3. Fetch the events information for the dummy calendar in Zoho using the calendar ID, calculated dates, and OAuth 2.0 API token
4. Normalize the response payload, extracting the data I needed to render the event cards
5. Make a second API call to retrieve the event descriptions for the returned events
6. Store the normalized data in an export module that could be converted to JSON when 11ty creates the build artifacts
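The first two items on that checklist can be sketched without touching the network. This is an illustration under stated assumptions, not the demo's script. The `yyyyMMdd` output format, the use of UTC, the `{ accessToken, expiresAt }` token shape, and the one-minute safety margin are all hypothetical choices.

```javascript
// Format a Date as yyyyMMdd using its UTC fields.
function toCompactDate(date) {
  const y = date.getUTCFullYear();
  const m = String(date.getUTCMonth() + 1).padStart(2, "0");
  const d = String(date.getUTCDate()).padStart(2, "0");
  return `${y}${m}${d}`;
}

// First and last day of the month that contains `now` (evaluated in UTC).
function getMonthRange(now = new Date()) {
  const year = now.getUTCFullYear();
  const month = now.getUTCMonth();
  const first = new Date(Date.UTC(year, month, 1));
  // Day 0 of the following month rolls back to the last day of this month.
  const last = new Date(Date.UTC(year, month + 1, 0));
  return { start: toCompactDate(first), end: toCompactDate(last) };
}

// Hypothetical stored token: { accessToken, expiresAt }, with expiresAt in epoch ms.
// The token counts as expired slightly early so it cannot lapse mid-request.
function needsRefresh(token, nowMs = Date.now(), skewMs = 60000) {
  return !token || nowMs >= token.expiresAt - skewMs;
}

console.log(getMonthRange(new Date(Date.UTC(2024, 1, 15))));
// → { start: '20240201', end: '20240229' }
```

A build server that runs in a non-UTC time zone may need the local-time getters instead, since a build that runs just after midnight local time can still fall in the previous month in UTC.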

For me, the most frustrating part of all of this was normalizing the dates and times returned by the API response. It didn’t really occur to me at first that “all-day” and time-specific events would return datetime properties in different formats. Honestly, it was something I didn’t even catch until after I wrote the client-side JavaScript to generate the event cards. It took me longer than I’d like to admit to get to the bottom of why only the all-day event cards wouldn’t populate with a date. Fortunately, though, the API response for each event included an isAllDay Boolean value, which made writing conditional statements for how to parse each event’s datetime values very straightforward.
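Here is a minimal sketch of that kind of branching. Only the isAllDay flag comes from the description above. The two raw string formats, the `start` property name, and the normalized output shape are assumptions made for illustration, so check them against the real payload before relying on them.

```javascript
// Assumed raw shapes (hypothetical, not copied from Zoho's documentation):
//   all-day event: { isAllDay: true,  start: "20250704" }
//   timed event:   { isAllDay: false, start: "20250704T180000Z" }
function parseEventStart(event) {
  if (event.isAllDay) {
    const dayMatch = /^(\d{4})(\d{2})(\d{2})$/.exec(event.start);
    if (!dayMatch) throw new Error(`Unexpected all-day format: ${event.start}`);
    // All-day events carry a date but no time of day.
    return { date: `${dayMatch[1]}-${dayMatch[2]}-${dayMatch[3]}`, time: null };
  }

  const timeMatch = /^(\d{4})(\d{2})(\d{2})T(\d{2})(\d{2})(\d{2})Z$/.exec(event.start);
  if (!timeMatch) throw new Error(`Unexpected timed format: ${event.start}`);
  return {
    date: `${timeMatch[1]}-${timeMatch[2]}-${timeMatch[3]}`,
    time: `${timeMatch[4]}:${timeMatch[5]}`,
  };
}

console.log(parseEventStart({ isAllDay: true, start: "20250704" }));
console.log(parseEventStart({ isAllDay: false, start: "20250704T180000Z" }));
```

Throwing on an unrecognized format makes a mismatch fail loudly at build time, instead of surfacing later as a card with a blank date.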

Really, the rest of the events page was smooth sailing. I had already written the logic for the products carousel on the Gallery page, so it was easy to write an adapted version for the events data. Also, since I output the normalized event data to an export module, I used Nunjucks and 11ty to convert the data into a JSON file during the build process. This allowed the events carousel script to fetch that local JSON file with a simple request, again keeping the data separate from my logic.
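On the client side, reading a build-time JSON file can be as small as the sketch below. The `/data/events.json` path, the `date` field, and the sorting step are assumptions. The `fetchImpl` parameter is a hypothetical addition that lets the function be exercised without a browser or a server.

```javascript
// Load the JSON produced at build time and return the events sorted by date.
// `fetchImpl` defaults to the global fetch but can be swapped for a stub.
async function loadEvents(url = "/data/events.json", fetchImpl = fetch) {
  const response = await fetchImpl(url);
  if (!response.ok) {
    throw new Error(`Could not load events: HTTP ${response.status}`);
  }
  const events = await response.json();
  // ISO-style date strings (YYYY-MM-DD) sort correctly as plain text.
  return [...events].sort((a, b) => a.date.localeCompare(b.date));
}

// Usage with a stubbed fetch, so no network request is made.
const fakeFetch = async () => ({
  ok: true,
  status: 200,
  json: async () => [
    { title: "Harvest Market", date: "2025-07-20" },
    { title: "Fireworks Night", date: "2025-07-04" },
  ],
});

loadEvents("/data/events.json", fakeFetch).then((events) => {
  console.log(events.map((e) => e.title));
});
```

The carousel code only ever sees an array of event objects, so it does not need to know whether they came from Zoho, a local file, or a test stub.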

The last trick I had up my sleeve is the one I thought was the most clever (but maybe it wasn’t… you be the judge). I mentioned that the first thing the script that calls the Zoho API needed to do was determine the current month, specifically its first and last dates, to specify which events should be returned. This script is run by 11ty at build time (not client-side in the browser), and a simple cron job on my web server rebuilds the demo at 12:01 am on the first of every month. Because I’ve also set up recurring seasonal events throughout the year in the Zoho dummy calendar, the events displayed in the demo will fit the month in which the demo is viewed without me needing to manually update anything at all. How fun is that?

What I Learned from All of This

Sure, refactoring a difficult-to-maintain codebase into something more manageable and organized turned into a few new features and a lot of work I didn’t anticipate at first. To me, though, it was well worth the effort. I was incredibly proud of the original demo when I submitted it as my course project a year ago. After all, it was my first website, and I quite literally drove myself insane trying to get it right. Even if it’s still not “perfect”, I’m incredibly proud of what I managed to accomplish in refactoring it.

There’s something inspirational in being able to look back to see just how far you’ve grown in a year’s time. You realize that little by little, each and every bump in the road along the way adds up to considerable improvement in skill if you just stick with it. You really start to see the forest for the trees, as they say.

TL;DR

I refactored a monolithic front-end demo into a modular, maintainable system using 11ty, Nunjucks, and JS. I separated data (credits, products, events) from presentation and logic, built a dedicated Credits & Attributions page, and made the product and event pages dynamic and interactive. The volume of work was the result of a ripple effect from changes that were made, but each change aligned with the refactor’s goals of modularity, maintainability, ethical attribution, and improved demonstration of my growth as a developer. Overall, the project was challenging, rewarding, and a clear reflection of growth over the past year.

Click here to check out the live demo.

Click here to check out the source code for this project.

Before You Go

As always, thank you so much for taking the time to read through some of my struggles and wins in full-stack development. I encourage you all to leave a comment telling me about your own experiences—maybe you’ve had similar trouble with third-party APIs, or maybe you have some tips on how I could have approached things differently. I look forward to reading what you have to say!

In the next log (#6), I’m going to share with you the progress I’ve been making on my blogging platform project. I’ve gotten started on building the dashboard using React.js and the KendoReact component library, so check back soon for #6 to drop!